Building a Vision‑Guided Robot: The CARM Project

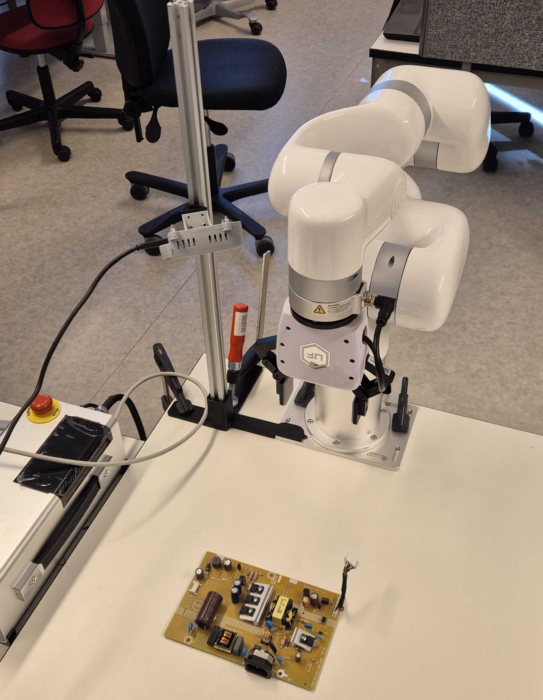

In the CARM project, our goal was to enable an xArm6 robot to detect and pick up components using a RealSense D435i camera and ROS2. The work combined computer vision, calibration, and motion planning into one integrated system. The project also built on experience from our bachelor’s thesis, where we used a manipulator to make waffles. That background proved useful, and our interest in robotic control made this project especially engaging.

The main objective was to pick components from circuit boards, focusing on capacitors, resistors, and transformers. The workflow consisted of three stages: camera‑based detection, AI‑based labeling, and a pick‑and‑place routine. We were given circuit boards, which we used to train our own YOLOv11 model on a dataset tailored to the components we wanted the robot to recognize.

A crucial part of the system was establishing a reliable transformation between the camera and the robot base. Since the existing ArUco package did not function as expected, we developed our own ROS2 node for marker detection. This allowed us to publish the marker pose directly into the TF tree and complete the calibration. To improve accuracy, we collected calibration samples with minimal rotation and focused on consistent translational movements, which produced more stable results.
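To make the calibration step more concrete, here is a minimal sketch of how a single marker observation can yield the camera pose in the robot base frame. It assumes the marker's pose in the base frame is already known, which may differ from how our node and calibration routine actually worked. All function names and numbers are hypothetical and only serve as illustration.

```python
import math

def mat_mul(a, b):
    """Multiply two 4x4 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]

def invert_rigid(t):
    """Invert a rigid transform [R | p] as [R^T | -R^T p]."""
    r_t = [[t[j][i] for j in range(3)] for i in range(3)]
    p = [t[i][3] for i in range(3)]
    new_p = [-sum(r_t[i][k] * p[k] for k in range(3)) for i in range(3)]
    return [r_t[i] + [new_p[i]] for i in range(3)] + [[0.0, 0.0, 0.0, 1.0]]

def make_transform(yaw, x, y, z):
    """Build a 4x4 transform from a rotation about z (radians) and a translation."""
    c, s = math.cos(yaw), math.sin(yaw)
    return [[c, -s, 0.0, x],
            [s, c, 0.0, y],
            [0.0, 0.0, 1.0, z],
            [0.0, 0.0, 0.0, 1.0]]

def camera_in_base(base_T_marker, cam_T_marker):
    """base_T_cam = base_T_marker * inverse(cam_T_marker)."""
    return mat_mul(base_T_marker, invert_rigid(cam_T_marker))

# Hypothetical values: marker pose known in the base frame (assumption),
# and the same marker as seen by the camera.
base_T_marker = make_transform(0.0, 0.4, 0.0, 0.0)
cam_T_marker = make_transform(math.pi / 2, 0.1, 0.0, 0.5)

base_T_cam = camera_in_base(base_T_marker, cam_T_marker)
print([round(base_T_cam[i][3], 3) for i in range(3)])
```

A quick sanity check for any result of this kind is to compose `base_T_cam` with `cam_T_marker` again and confirm that it reproduces `base_T_marker`.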

With calibration in place, the perception pipeline could run end‑to‑end. The system captured RGB‑D images, performed object detection using YOLOv11, reconstructed 3D points from the depth values using the camera intrinsics, and transformed them into the robot’s coordinate frame. These coordinates were then used to execute a sequence of approach, grasp, lift, and place motions.
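The 3D reconstruction step can be sketched with the standard pinhole camera model. This is a simplified illustration, not our actual node: it ignores lens distortion, and the intrinsics, bounding box, depth value, and camera pose below are made-up placeholders.

```python
def bbox_center(x1, y1, x2, y2):
    """Return the pixel at the center of a detection bounding box."""
    return ((x1 + x2) / 2.0, (y1 + y2) / 2.0)

def deproject_pixel(u, v, depth_m, fx, fy, cx, cy):
    """Pinhole model: map pixel (u, v) with depth in meters to a camera-frame point."""
    x = (u - cx) * depth_m / fx
    y = (v - cy) * depth_m / fy
    return (x, y, depth_m)

def transform_point(t, p):
    """Apply a 4x4 homogeneous transform (nested lists) to a 3D point."""
    x, y, z = p
    return tuple(t[i][0] * x + t[i][1] * y + t[i][2] * z + t[i][3]
                 for i in range(3))

# Placeholder intrinsics (assumption): focal lengths and principal point in pixels.
FX, FY, CX, CY = 600.0, 600.0, 320.0, 240.0

# Placeholder calibration result (assumption): camera 0.8 m above the base,
# looking straight down, i.e. rotated 180 degrees about its x axis.
BASE_T_CAM = [[1.0, 0.0, 0.0, 0.3],
              [0.0, -1.0, 0.0, 0.0],
              [0.0, 0.0, -1.0, 0.8],
              [0.0, 0.0, 0.0, 1.0]]

u, v = bbox_center(380, 200, 420, 240)       # detection box in pixels
point_cam = deproject_pixel(u, v, 0.5, FX, FY, CX, CY)
point_base = transform_point(BASE_T_CAM, point_cam)
print(point_cam, point_base)
```

With these placeholder values, the component lies 0.5 m in front of the camera, which places it 0.3 m above the robot base plane. The resulting base-frame point is what the approach, grasp, lift, and place motions would be planned around.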

To ensure safe motion planning, we integrated collision detection through MoveIt. The robot’s environment was modeled by adding collision objects such as the camera mount and support structures directly into the planning scene. MoveIt used this information to compute collision‑free Cartesian paths and reject trajectories that intersected with obstacles. This made the system significantly more robust and prevented accidental collisions during operation.
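The idea of rejecting a path that intersects a modeled obstacle can be illustrated with a deliberately simplified stand-in. The sketch below is not MoveIt and does not use its API: it only checks sampled tool positions along a straight line against axis-aligned boxes, whereas MoveIt checks the full robot geometry against the planning scene. The box dimensions and function names are hypothetical.

```python
def interpolate(start, goal, steps):
    """Sample points along the straight line from start to goal (inclusive)."""
    return [tuple(s + (g - s) * i / steps for s, g in zip(start, goal))
            for i in range(steps + 1)]

def point_in_box(p, box_min, box_max, padding=0.0):
    """True if point p lies inside the axis-aligned box grown by padding."""
    return all(lo - padding <= c <= hi + padding
               for c, lo, hi in zip(p, box_min, box_max))

def path_is_collision_free(start, goal, boxes, steps=50, padding=0.02):
    """Reject the straight-line path if any sampled point is inside a box."""
    for p in interpolate(start, goal, steps):
        if any(point_in_box(p, lo, hi, padding) for lo, hi in boxes):
            return False
    return True

# Hypothetical obstacle: a camera mount modeled as a tall, thin box (meters).
camera_mount = ((0.25, -0.05, 0.0), (0.35, 0.05, 0.8))

blocked = path_is_collision_free((0.1, 0.0, 0.3), (0.5, 0.0, 0.3), [camera_mount])
clear = path_is_collision_free((0.1, 0.2, 0.3), (0.5, 0.2, 0.3), [camera_mount])
print(blocked, clear)
```

The first path runs straight through the mount and is rejected, while the second passes 0.2 m to the side and is accepted. The padding plays the role of a safety margin around each obstacle.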

We also planned to create a digital twin of the system. The robot was successfully added to Gazebo, but we ran out of time to complete a fully functional simulation model.

Throughout the project, we encountered several practical challenges. Working through them gave us a deeper understanding of ROS2, the RealSense ecosystem, and the xArm control interface. It also showed how strongly stable operation depends on the robot and each of its software dependencies working together.

Overall, the project provided valuable hands‑on experience with vision‑based manipulation and demonstrated how perception, planning, and motion must work together for a robot to interact reliably with its environment.