During the CARM project, the accuracy and reliability of the system were crucial. For the robot to behave as expected, the visual detection pipeline had to be both consistent and precise, which required improving the performance of the AI model. Expanding the dataset and increasing the variation in the training images made the model more robust and improved its predictions. Our original dataset of 151 images was augmented to approximately 1400 images, and this increase in data volume raised the model's detection confidence by roughly 10–15%.
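The report does not list the transforms that took the dataset from 151 to about 1400 images. The sketch below assumes simple geometric and brightness augmentations applied to YOLO-format labels (class ID followed by a normalized box center, width, and height). The function names and the choice of transforms are hypothetical. The point it illustrates is that every geometric change to an image must also be applied to its bounding boxes. Producing nine versions of each original image (151 × 9 = 1359) would land close to the reported dataset size.

```python
from typing import List, Tuple

# Hypothetical representations: a grayscale image as rows of 0..255 pixels, and a
# YOLO-style label (class_id, x_center, y_center, width, height) normalized to [0, 1].
Image = List[List[int]]
Label = Tuple[int, float, float, float, float]
Sample = Tuple[Image, List[Label]]


def hflip(img: Image, labels: List[Label]) -> Sample:
    """Mirror left-right: only the x-center of each box changes."""
    return [row[::-1] for row in img], [(c, 1.0 - x, y, w, h) for c, x, y, w, h in labels]


def vflip(img: Image, labels: List[Label]) -> Sample:
    """Mirror top-bottom: only the y-center of each box changes."""
    return [list(row) for row in img[::-1]], [(c, x, 1.0 - y, w, h) for c, x, y, w, h in labels]


def rot90_cw(img: Image, labels: List[Label]) -> Sample:
    """Rotate 90 degrees clockwise: centers map (x, y) -> (1 - y, x) and width/height swap."""
    rotated = [list(row) for row in zip(*img[::-1])]
    return rotated, [(c, 1.0 - y, x, h, w) for c, x, y, w, h in labels]


def brightness(img: Image, labels: List[Label], factor: float) -> Sample:
    """Scale pixel intensities and clamp to 0..255; boxes are unchanged."""
    scaled = [[min(255, max(0, round(p * factor))) for p in row] for row in img]
    return scaled, list(labels)


def augment(img: Image, labels: List[Label]) -> List[Sample]:
    """Return the original sample plus eight augmented variants (nine in total)."""
    samples: List[Sample] = [(img, list(labels))]
    rot_img, rot_labels = img, labels
    for _ in range(3):  # 90, 180 and 270 degree rotations
        rot_img, rot_labels = rot90_cw(rot_img, rot_labels)
        samples.append((rot_img, rot_labels))
    samples.append(hflip(img, labels))
    samples.append(vflip(img, labels))
    samples.append(brightness(img, labels, 0.7))
    samples.append(brightness(img, labels, 1.3))
    samples.append(hflip(*brightness(img, labels, 1.3)))  # combined transform
    return samples
```

Pixel-level augmentations such as brightness changes leave the labels untouched, which is why they are cheap to combine with the geometric ones.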

To further improve reliability, we switched from a less accurate YOLO model to the YOLOv11‑XL variant. Although this larger model took longer to train, it raised detection confidence by about another 5%. For capacitors, the model consistently reached confidence levels above 75%, and in some cases as high as 96%.
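A claim such as "above 75% for capacitors" can be checked by summarizing detection confidences per class over a set of test images. The sketch below is a minimal, library-independent version. The `Detection` record, the class names, and the helper functions are hypothetical stand-ins for whatever the detector actually returns.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, Iterable, List


@dataclass
class Detection:
    """Hypothetical detector output: class name and confidence in [0, 1]."""
    class_name: str
    confidence: float


def filter_confident(dets: Iterable[Detection], threshold: float) -> List[Detection]:
    """Keep only detections at or above the confidence threshold."""
    return [d for d in dets if d.confidence >= threshold]


def confidence_summary(dets: Iterable[Detection]) -> Dict[str, Dict[str, float]]:
    """Return the min, mean and max confidence, plus a count, for each detected class."""
    by_class: Dict[str, List[float]] = {}
    for d in dets:
        by_class.setdefault(d.class_name, []).append(d.confidence)
    return {
        name: {"min": min(c), "mean": mean(c), "max": max(c), "count": len(c)}
        for name, c in by_class.items()
    }
```

Looking at the per-class minimum rather than only the mean is what supports a statement like "consistently above 75%".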

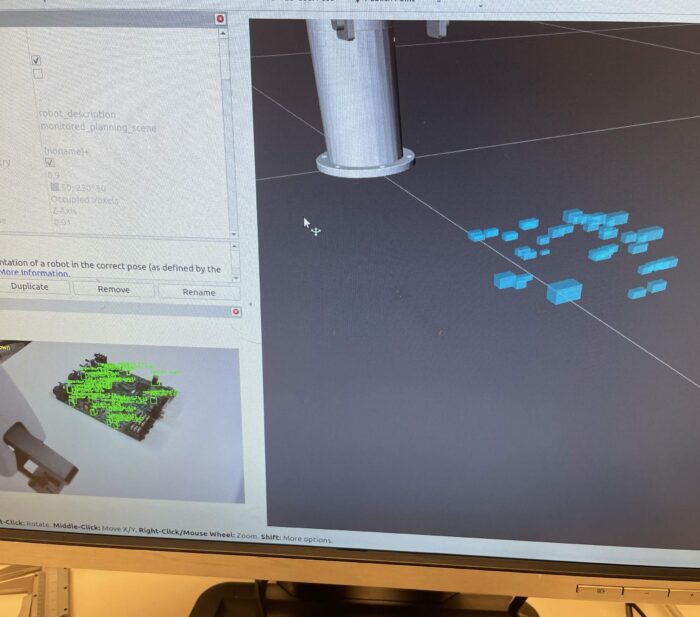

The improved model also enabled a safer and more reliable method for picking up components. From the point cloud, we generated bounding boxes in MoveIt to represent the surrounding components on the circuit board, which allowed the system to check for potential collisions before moving the robot. Collisions with the target component were permitted, but collisions with neighboring components were blocked. As a result, the robot would sometimes refuse to pick up a component when the motion would cause a collision, and it reported that the motion was not possible.
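The collision rule can be illustrated with axis-aligned bounding boxes. The sketch below builds a box around each component's point-cloud points, then checks a box around the gripper at evenly spaced points along a straight-line approach. Contact with the target is ignored, and the motion is rejected if any neighbor is hit. This only approximates what MoveIt does: in MoveIt the boxes would be collision objects in the planning scene, and the exception for the target would be expressed through the allowed collision matrix. All names here (`aabb_from_points`, `motion_is_allowed`, the gripper half-size, the straight-line sampling) are hypothetical.

```python
from typing import Dict, Sequence, Tuple

Point = Tuple[float, float, float]
Box = Tuple[Point, Point]  # (min corner, max corner), axis-aligned


def aabb_from_points(points: Sequence[Point]) -> Box:
    """Tightest axis-aligned box around one component's cluster of points."""
    xs, ys, zs = zip(*points)
    return (min(xs), min(ys), min(zs)), (max(xs), max(ys), max(zs))


def boxes_overlap(a: Box, b: Box) -> bool:
    """Two axis-aligned boxes intersect iff their extents overlap on every axis."""
    (a_min, a_max), (b_min, b_max) = a, b
    return all(a_min[i] <= b_max[i] and b_min[i] <= a_max[i] for i in range(3))


def gripper_box(center: Point, half_size: Point) -> Box:
    """Box around a hypothetical gripper centered at `center`."""
    lo = tuple(c - h for c, h in zip(center, half_size))
    hi = tuple(c + h for c, h in zip(center, half_size))
    return lo, hi


def motion_is_allowed(start: Point, goal: Point, half_size: Point,
                      obstacles: Dict[str, Box], target: str, steps: int = 20) -> bool:
    """Sample the straight line from start to goal. Contact with `target` is
    allowed; contact with any other component rejects the whole motion."""
    for k in range(steps + 1):
        t = k / steps
        center = tuple(s + t * (g - s) for s, g in zip(start, goal))
        swept = gripper_box(center, half_size)
        for name, box in obstacles.items():
            if name != target and boxes_overlap(swept, box):
                return False
    return True
```

Returning `False` corresponds to the robot refusing the pick; a real planner would also check the arm links, not just the gripper.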

When sequencing between components, the robot picked the one closest to its base first to minimize unnecessary movement. Components could also be sorted by class ID, so that capacitors, resistors, and transformers were each placed in their own pile, forming a simple sorting system.
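The pick ordering and sorting can be sketched as follows. It assumes each detected component has a position in the robot base frame (so the base sits at the origin) and a class ID. The ID-to-pile mapping, the `Component` record, and the function names are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import Dict, List, Tuple

# Hypothetical class-ID mapping; the real IDs depend on the trained model.
CLASS_NAMES = {0: "capacitor", 1: "resistor", 2: "transformer"}


@dataclass
class Component:
    class_id: int
    position: Tuple[float, float, float]  # metres, in the robot base frame


def distance_to_base(c: Component) -> float:
    """Euclidean distance from the robot base (origin of the base frame)."""
    return math.dist((0.0, 0.0, 0.0), c.position)


def pick_order(components: List[Component]) -> List[Component]:
    """Closest-to-base first, to keep arm motions short."""
    return sorted(components, key=distance_to_base)


def assign_piles(components: List[Component]) -> Dict[str, List[Component]]:
    """Group components into one pile per class, keeping pick order within each pile."""
    piles: Dict[str, List[Component]] = {}
    for c in pick_order(components):
        name = CLASS_NAMES.get(c.class_id, "unknown")
        piles.setdefault(name, []).append(c)
    return piles
```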